Quantum computers promise to outclass their classical counterparts at tasks ranging from molecule design to secure communication, yet today’s prototypes are still unreliable. Every qubit is exquisitely sensitive to stray electromagnetic fields, cosmic rays, even minute vibrations in the chip packaging. Because errors accumulate with every gate, the probability that a circuit finishes without a single fault shrinks exponentially with circuit depth, washing out the delicate quantum interference that makes the whole enterprise worthwhile. The field’s most urgent quest, therefore, is not to build larger processors per se, but to engineer reliable qubits out of unreliable ones, a pursuit known as quantum error correction (QEC).
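A back-of-the-envelope sketch shows why circuit size is so punishing. It assumes, for illustration only, that every gate fails independently with the same probability p, so a circuit of n gates runs cleanly with probability (1 − p)ⁿ, which decays exponentially in n. The 100-qubit, depth-100 workload and the error rates are hypothetical round numbers, not data from any particular device.

```python
import math


def clean_run_probability(p_gate: float, n_gates: int) -> float:
    """Probability that none of n_gates gates fails.

    Assumes (hypothetically) independent, uncorrelated errors with the same
    rate p_gate on every gate; real devices have correlated, gate-dependent noise.
    """
    # log1p keeps precision when p_gate is tiny (e.g. 1e-15)
    return math.exp(n_gates * math.log1p(-p_gate))


# Hypothetical workload: 100 qubits x depth 100 = 10,000 gates
n_gates = 100 * 100
for p in (1e-2, 1e-3, 1e-15):
    print(f"p = {p:g}: clean-run probability = {clean_run_probability(p, n_gates):.3g}")
```

Even at p = 10⁻³ the clean-run probability for this toy workload falls below 10⁻⁴, whereas an error rate of 10⁻¹⁵ leaves it essentially at 1. That gap is what error correction aims to close.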

Why Quantum Errors Are Harder Than Classical Ones

Classical bits suffer only bit-flip errors (0 → 1 or 1 → 0) and can be read, copied and refreshed at will. Qubits, by contrast, can be corrupted in two independent ways: bit flips and phase flips (and combinations of the two). Worse, the no-cloning theorem forbids us from copying an unknown quantum state, so the straightforward redundancy tricks of classical error-correcting codes do not directly apply. Measuring a qubit directly would also collapse the very superposition QEC is meant to protect. QEC therefore spreads each logical qubit across several physical ones and relies on indirect, parity-based syndrome measurements, which reveal whether and where an error occurred without revealing the encoded state, followed by fast classical decoding.
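Parity-based syndrome measurement can be illustrated with the three-bit repetition code. The sketch below considers bit flips only, which lets the encoded state be modeled with classical bits; it is a simplification, since a real device measures the parities with ancilla qubits rather than by reading the data. It demonstrates the key property: the two parity checks locate a single flip but come out identical whether the encoded value is 0 or 1, so they carry no information about the logical state itself.

```python
from itertools import product

# Parity checks of the 3-bit repetition code (Z1Z2 and Z2Z3 in quantum notation)
CHECKS = [(0, 1), (1, 2)]

# Syndrome -> index of the single flipped bit (None means no flip detected)
LOOKUP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def encode(bit: int) -> list[int]:
    """Encode one logical bit as three identical physical bits."""
    return [bit] * 3


def syndrome(bits: list[int]) -> tuple[int, ...]:
    """Report only pairwise parities, never an individual bit's value."""
    return tuple(bits[i] ^ bits[j] for i, j in CHECKS)


def correct(bits: list[int]) -> list[int]:
    """Undo at most one bit flip using the syndrome lookup table."""
    fixed = list(bits)
    flipped = LOOKUP[syndrome(bits)]
    if flipped is not None:
        fixed[flipped] ^= 1
    return fixed


# Every error pattern yields the same syndrome for logical 0 and logical 1
for error in product([0, 1], repeat=3):
    s0 = syndrome([b ^ e for b, e in zip(encode(0), error)])
    s1 = syndrome([b ^ e for b, e in zip(encode(1), error)])
    assert s0 == s1
```

A single flip is always repaired, but two flips (for example 000 → 110) are "corrected" to the wrong codeword 111. Larger codes push that failure point further out.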

The Threshold Theorem: Hope in Mathematics

In 1996, Aharonov and Ben-Or, followed by Knill, Laflamme and Zurek, proved the quantum threshold theorem: if the physical error rate per operation is below a critical value (the fault-tolerance threshold), arbitrarily long computations become possible, at the cost of extra qubits and gates that grow only polylogarithmically with the length of the computation. Modern surface-code analyses put this threshold near 1 % under standard circuit-level noise models. In practice, hardware must operate well below it, around 0.1 %, to keep the qubit overhead manageable. Those numbers provide the yardstick by which every hardware group now measures progress.
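A widely used heuristic makes the threshold concrete for the surface code: the logical error rate scales roughly as p_L ≈ A·(p/p_th)^((d+1)/2), where d is the code distance. The prefactor A = 0.1 and threshold p_th = 1 % in this sketch are illustrative assumptions, not fitted values. Below threshold, each increase in distance suppresses logical errors further. Above it, a bigger code only makes things worse.

```python
def logical_error_rate(p: float, d: int, p_th: float = 1e-2, a: float = 0.1) -> float:
    """Heuristic surface-code logical error rate per round of syndrome extraction.

    p_L ~ a * (p / p_th) ** ((d + 1) / 2); the prefactor a and threshold p_th
    are illustrative assumptions, not values fitted to any device.
    """
    return a * (p / p_th) ** ((d + 1) / 2)


distances = (3, 5, 7)
for p in (1.5e-2, 1e-3):  # one physical error rate above, one below the assumed threshold
    rates = ", ".join(f"d={d}: {logical_error_rate(p, d):.1e}" for d in distances)
    print(f"p = {p:g} -> {rates}")
```

With p = 10⁻³ (ten times below the assumed threshold), each step from d to d + 2 buys another factor of ten. At p = 1.5 % the trend reverses.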

The Surface Code: Workhorse of the Industry

Most superconducting and spin-qubit platforms pursue the 2-D surface code, where a “logical” qubit is woven out of a checkerboard of data and measurement qubits. Periodic syndrome extraction reveals defects (which behave like anyons) whose positions across rounds tell a classical decoder which physical qubits have most likely flipped. The approach is hardware-friendly, requiring only nearest-neighbor couplings, and has one of the highest known thresholds among codes with local checks. The trade-off is heavy overhead: on the order of 1,000 physical qubits may be required for a single low-error logical qubit at scale.
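A rough feel for that overhead comes from combining two standard ingredients: the rotated surface code’s footprint of 2d² − 1 physical qubits (d² data plus d² − 1 measurement qubits) and the heuristic scaling p_L ≈ A·(p/p_th)^((d+1)/2). The sketch picks the smallest odd distance that meets a target logical error rate. A = 0.1, p_th = 1 % and the 10⁻¹² target are illustrative assumptions. Routing, magic-state factories and decoding hardware are ignored.

```python
def required_distance(p: float, target: float, p_th: float = 1e-2, a: float = 0.1) -> int:
    """Smallest odd distance d with a * (p / p_th) ** ((d + 1) / 2) <= target.

    a and p_th are illustrative assumptions, not measured values.
    """
    if p >= p_th:
        raise ValueError("physical error rate must be below the threshold")
    d = 3
    while a * (p / p_th) ** ((d + 1) / 2) > target:
        d += 2  # surface-code distances are odd
    return d


def physical_qubits(d: int) -> int:
    """Rotated surface code: d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * d * d - 1


for p in (2e-3, 5e-4):
    d = required_distance(p, target=1e-12)
    print(f"p = {p:g}: d = {d}, {physical_qubits(d)} physical qubits per logical qubit")
```

Under these assumptions, p = 2 × 10⁻³ calls for d = 31 (1,921 qubits), while p = 5 × 10⁻⁴ needs only d = 17 (577 qubits). Better physical qubits shrink the footprint far faster than any other lever in this toy model.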

Recent Milestones

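• 2021 – Google’s Sycamore showed that lengthening a repetition code from 5 to 21 qubits suppressed bit- or phase-flip errors exponentially, an early lab-scale hint that “scaling wins.”

• 2022 – IBM’s Eagle processor (127 qubits) ran 4-round cycles of a distance-3 code, with real-time feed-forward inside a 1 µs window.

• 2023 – Google grew a surface code from distance 3 to distance 5 and saw the logical error per cycle fall slightly (about 3.0 % to 2.9 % over 25 cycles), the first sign that a larger surface code can beat a smaller one.

• 2024 – Google’s Willow chip ran surface codes up to distance 7 below threshold, roughly halving the logical error rate with each step in distance and keeping a logical qubit alive longer than its best physical qubit.

• 2024 – Quantinuum, working with Microsoft, reported trapped-ion logical circuits whose error rates were about 800 times lower than those of the corresponding physical circuits.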

Alternative Codes and Exotic Qubits

Not every lab bets on the surface code. At Yale, “cat” qubits store information in microwave cavity superpositions, using bosonic codes that correct single-photon loss with only one physical oscillator. NV centers, photonic cluster states and Majorana zero modes each offer tailored error models that may relax overheads. The diversity mirrors early classical computing, where vacuum tubes, transistors and magnetic cores competed before silicon CMOS emerged dominant.

Decoders: The Hidden Software Battle

Measurement syndromes arrive roughly every microsecond and must be decoded at least as fast as they are produced; otherwise a backlog builds up and the computation stalls. Leading candidates, including minimum-weight perfect matching, tensor-network renormalization and neural-network decoders, are all vying for low-latency implementations on dedicated hardware such as FPGAs and ASICs. A reliable quantum computer, it turns out, will also need an ultra-fast classical co-processor sitting inches away from the cryostat.
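Minimum-weight decoding rests on a simple principle: when errors are rare, the most likely explanation of a syndrome is the error pattern with the fewest flips. For a tiny bit-flip repetition code, that principle can be implemented by brute force, as in the sketch below. The exhaustive search is a stand-in chosen for illustration, not minimum-weight perfect matching itself. Its cost doubles with every added qubit, which is exactly why efficient algorithms such as matching, and fast hardware to run them, matter.

```python
from itertools import product


def syndrome(error: tuple[int, ...]) -> tuple[int, ...]:
    """Neighbouring-pair parities of a repetition code (bit-flip errors only)."""
    return tuple(error[i] ^ error[i + 1] for i in range(len(error) - 1))


def min_weight_decode(s: tuple[int, ...]) -> tuple[int, ...]:
    """Return a lowest-weight error pattern whose syndrome equals s.

    Exhaustive search over all 2**n patterns: fine for n ~ 20, hopeless at scale.
    """
    n = len(s) + 1
    candidates = (e for e in product((0, 1), repeat=n) if syndrome(e) == s)
    return min(candidates, key=sum)


# Distance-7 example: bits 1 and 2 flipped
observed = syndrome((0, 1, 1, 0, 0, 0, 0))
print(min_weight_decode(observed))
```

For a distance-7 code, any pattern of up to three flips is identified correctly. A fourth flip tips the decoder toward the complementary three-flip pattern, which is the code’s distance limit at work.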

Roadmaps and Resource Estimates

• To break a 2048-bit RSA key via Shor’s algorithm, theoretical estimates call for ~20 million physical superconducting qubits running for eight hours, assuming surface-code overheads and a physical error rate of 10⁻³.

• A practically useful error-corrected quantum advantage in chemistry might require only ~100–300 logical qubits, translating to 100k–300k physical qubits at projected error rates of 10⁻⁴.

• Industry roadmaps (IBM by 2029, Google around 2030) target million-qubit-scale platforms with full QEC, contingent on physical error rates continuing to halve roughly every 18 months.
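The roadmap’s pace assumption can be turned into a timeline. Reading it as physical error rates halving every ~18 months (an interpretation for illustration, not a published industry figure), the time to reach the 10⁻⁴ assumed in the chemistry estimate follows from a base-2 logarithm. The 10⁻³ starting point is an assumed round number.

```python
import math


def years_to_reach(p_now: float, p_target: float, halving_years: float = 1.5) -> float:
    """Years needed if the error rate halves every `halving_years` (an assumed trend)."""
    return halving_years * math.log2(p_now / p_target)


print(f"{years_to_reach(1e-3, 1e-4):.1f} years")
```

Under those assumptions, the tenfold improvement takes about five years (1.5 × log₂ 10 ≈ 5.0). The roadmap dates are only as firm as that trend.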

The Bottlenecks Ahead

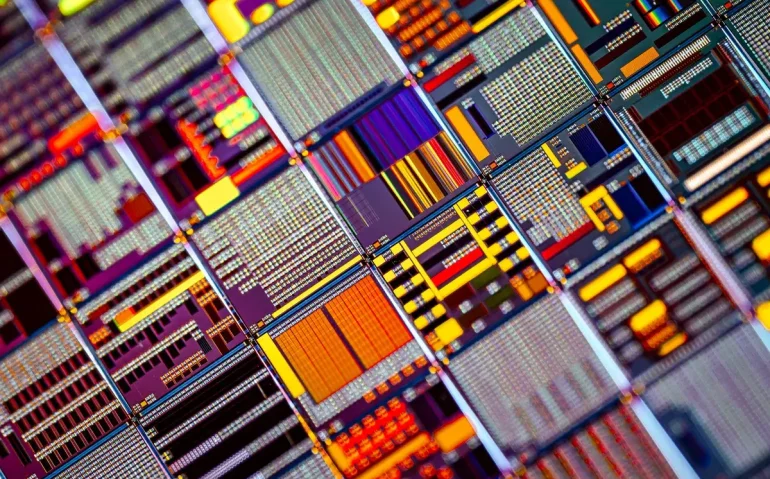

1. Fabrication yield: Defect-free chips larger than 500 qubits are still rare.

2. Cryogenic wiring: Each qubit currently demands its own microwave line; scaling to millions will require on-chip multiplexing and cryo-CMOS control.

3. Correlated noise: Cosmic-ray hits and material defects can flip dozens of qubits simultaneously, violating the local-error assumptions of existing codes.

4. Algorithmic overhead: Even with QEC, arbitrary rotations must be synthesized from T gates, and each T gate consumes a “magic state” that has to be purified by costly distillation; new compilation techniques (e.g., lattice surgery and twist-based magic-state factories) are being developed to cut the constant factors.
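A short calculation shows why distillation is costly. One common protocol, Bravyi and Kitaev’s 15-to-1 scheme (chosen here for illustration), consumes 15 noisy magic states to output one whose error is roughly 35p³ to leading order. The sketch iterates that formula and counts raw states per purified output. It assumes perfect Clifford operations and ignores failed rounds, factory layout and timing, so it understates the real cost.

```python
def distill(p_in: float, target: float, max_rounds: int = 10) -> tuple[int, int, float]:
    """Rounds of 15-to-1 distillation needed to push a magic-state error below target.

    Uses the leading-order output error 35 * p**3 and assumes perfect Clifford
    gates. Returns (rounds, raw states consumed per output state, final error).
    """
    p, rounds = p_in, 0
    while p > target:
        if rounds == max_rounds:
            raise ValueError("target not reached within max_rounds")
        p_next = 35 * p ** 3  # leading-order output error of one 15-to-1 round
        if p_next >= p:
            raise ValueError("input error too high for 15-to-1 distillation to help")
        p, rounds = p_next, rounds + 1
    # Each round consumes 15 inputs per output; post-selection failures are ignored
    return rounds, 15 ** rounds, p


for target in (1e-7, 1e-15):
    rounds, raw, p_out = distill(1e-3, target)
    print(f"target {target:g}: {rounds} round(s), {raw} raw states, error ~{p_out:.1e}")
```

Starting from 10⁻³, one round reaches about 3.5 × 10⁻⁸ at a cost of 15 raw states. A target of 10⁻¹⁵ needs a second round and 225 raw states for every single T gate.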

Why the Progress Matters

Surpassing the error threshold is the quantum analogue of building the first transistor: a qualitative shift that unlocks engineering, rather than scientific, development. Once logical error rates fall below 10⁻¹⁵, software teams can write algorithms without wrestling with noise models, and the discussion moves from “if” to “how fast.” The recent experiments show the slope is positive; the next decade will reveal whether the climb is scalable.

Takeaway

Quantum error correction has moved from an abstruse theoretical construct to a measurable engineering sprint. While daunting challenges remain, with materials science, cryogenics, control electronics and algorithmic overhead foremost among them, the field now has a quantitative roadmap and growing empirical evidence that hardware can operate below the threshold the theorem requires. The race is on, and the finish line is nothing less than fault-tolerant quantum computation.