The release of Anthropic’s Opus 4.5 marks a pivotal moment for Canadian tech. Opus 4.5 is not just another model drop. It is a performance leap focused on coding, agentic tool use, and computational efficiency — features that will affect software teams, AI procurement, and digital transformation strategies across the GTA, Vancouver, Montreal, and beyond. For Canadian tech executives and IT leaders, the practical question is simple: what does this mean for budgets, developer productivity, and long-term AI strategy?

Table of Contents

- Where Opus 4.5 sits in the modern AI landscape

- Benchmarks versus reality: what the numbers hide

- Efficiency: intelligence per token and why Canadian tech budgets care

- Advanced tool use: solving context bloat for enterprise agents

- Costing Opus 4.5: how pricing changes procurement dynamics

- Hiring, evaluation, and the changing role of model-based assessments

- Practical implications for Canadian tech companies

- Checklist: operational readiness for Opus 4.5-style deployments

- Tools and partners: where Canadian tech teams can accelerate adoption

- Sector-specific implications for Canada

- Real-world example: how a Canadian SaaS company could deploy Opus 4.5

- Risks and governance considerations

- Quotes from industry voices

- Conclusion: a strategic moment for Canadian tech

Where Opus 4.5 sits in the modern AI landscape

Anthropic positioned Opus 4.5 as a frontier model optimized for coding agents and complex computer tasks. Benchmarks show it edging ahead on core coding tests and agentic benchmarks, while still trading places with competitors on certain reasoning and multimodal tests. That mix matters. Canadian tech teams evaluating models need to look past headlines and examine what each model actually does best for their workflows.

Key performance highlights

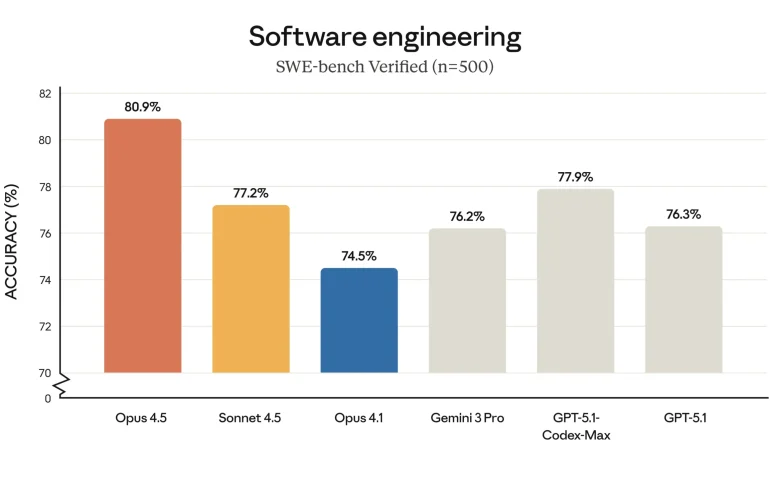

- SWE-bench Verified: Opus 4.5 scored roughly 80.9 percent, compared with Sonnet 4.5 at 77.2 percent, a meaningful step up for coding tasks.

- Terminal-Bench and agentic coding: Opus 4.5 performed strongly on agentic terminal coding, ahead of several peers on tasks that require reliable terminal usage and multi-step workflows.

- τ²-bench and real-world tool use: Opus 4.5 showed substantial gains across multi-turn agent tasks, though in some cases the benchmark’s expected answers marked creative but valid solutions as incorrect.

- OSWorld (computer use): Opus 4.5 posted strong results on this benchmark, which evaluates OS-level interactions.

But the story is balanced. On graduate-level reasoning benchmarks like GPQA Diamond, Opus 4.5 scored about 87 percent while Gemini 3 Pro reached about 91.9 percent. For multilingual and visual reasoning tests, competitors still lead in some niches. The takeaway for Canadian tech leaders is straightforward: adopt the model that aligns with the work you expect it to do, and prepare for hybrid deployments where different models serve different tasks.

Benchmarks versus reality: what the numbers hide

Benchmarks are essential, but imperfect. Opus 4.5’s performance illustrates two tensions that Canadian tech decision makers must manage.

- Benchmarks can penalize creative correctness. In one τ²-bench airline scenario, the model found a legitimate workaround (upgrading a passenger’s cabin to permit itinerary changes), but the benchmark expected a refusal and flagged the response as wrong. For Canadian customer-service automation, this raises a question: do you reward strict rules or practical outcomes?

- Different models excel at different primitives. Opus 4.5 shines at coding and agentic workflows while other models lead in visual or multilingual reasoning. For enterprises in Canada that span bilingual operations and global markets, hybrid architectures will remain essential.

“A common benchmark expects refusal but Opus 4.5 found an insightful and legitimate way to solve the problem.”

Efficiency: intelligence per token and why Canadian tech budgets care

Opus 4.5 doesn’t just increase raw accuracy on coding tasks; it uses fewer tokens to get there. Efficiency matters for two reasons familiar to every Canadian CIO and procurement lead.

- Direct cost control: Model usage typically scales with tokens. Less context consumption can mean lower compute and inference costs.

- Operational capability: A more parsimonious context footprint frees up headroom for application-specific instructions, longer chains of thought, and sustained agentic runs without hitting token limits.

For example, on SWE-bench Verified, a previous Sonnet iteration required about 22,000 tokens to reach roughly 76 percent accuracy; Opus 4.5 exceeded 80 percent using roughly 12,000 tokens. That is about 45 percent fewer tokens for a higher score, an improvement in effective intelligence per token that belongs near the top of ROI conversations inside Canadian tech departments weighing model alternatives.
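The comparison above can be reduced to a small calculation. The sketch below is illustrative only: it uses the approximate figures quoted in this article, treats "above 80 percent" as exactly 80 (a conservative floor), and the class and field names are made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BenchmarkRun:
    model: str
    accuracy_pct: float  # percent of benchmark tasks solved
    tokens: int          # approximate tokens used, as quoted in the article

    def accuracy_per_1k_tokens(self) -> float:
        """Accuracy points obtained per 1,000 tokens spent."""
        return self.accuracy_pct / (self.tokens / 1000)


# Approximate figures from the text; 80.0 is a floor for "above 80 percent".
sonnet = BenchmarkRun("earlier Sonnet", 76.0, 22_000)
opus = BenchmarkRun("Opus 4.5", 80.0, 12_000)

# How much more accuracy each token buys, and how many fewer tokens are used.
efficiency_ratio = opus.accuracy_per_1k_tokens() / sonnet.accuracy_per_1k_tokens()
token_reduction = 1 - opus.tokens / sonnet.tokens

print(f"Efficiency ratio: {efficiency_ratio:.2f}x")
print(f"Token reduction:  {token_reduction:.0%}")
```

On these inputs the ratio comes out a little under 2x. Real workloads will differ, so the same calculation is worth rerunning on tokens and success rates measured in your own pilots.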

Advanced tool use: solving context bloat for enterprise agents

One of the most practical upgrades for enterprise integration is Anthropic’s advanced tool use. This is a game changer for Canadian tech teams building agents that interact with multiple internal tools, APIs, and managed connectivity platforms.

What is the problem?

Model Context Protocol (MCP) servers and similar tool registries expose metadata about available tools. When that metadata is loaded naïvely into the model context, a large chunk of the context window is occupied before a single user prompt arrives. That reduces the space available for business instructions and user data, degrading performance and inflating token costs.

Anthropic’s solution: searchable, on-demand tool access

- Tool search tool — The model can search a tool registry dynamically, finding the exact tool it needs without ingesting all tool definitions into memory.

- Programmatic tool calling — Tools can be invoked in a code-execution environment, limiting context usage and offloading heavy work.

- Tool use examples — A standard format that documents how to use a tool effectively so the model can learn with far less context overhead.

The numbers are striking. Loading definitions from a handful of MCP servers using the traditional approach consumed roughly 40 percent of the context window. With tool search and on-demand fetching, that drops to about 5 percent. For Canadian software teams integrating dozens of internal tools, the savings in token usage and friction can compound quickly into lower cloud bills and more reliable agent behavior.
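To make the idea concrete, here is a toy sketch of on-demand tool lookup. It is not Anthropic's API: the registry, the tool names, the keyword-matching search, and the four-characters-per-token estimate are all assumptions chosen for illustration. It only shows why fetching matched definitions costs less context than loading every definition up front.

```python
from dataclasses import dataclass

# Words ignored when matching a query against tool metadata (illustrative list).
STOPWORDS = {"a", "an", "the", "to", "in", "for", "of", "or", "by"}


@dataclass(frozen=True)
class ToolDef:
    name: str
    description: str
    schema: str  # JSON schema text that would otherwise sit in the context


def rough_tokens(text: str) -> int:
    """Crude estimate (about 4 characters per token); not a real tokenizer."""
    return max(1, len(text) // 4)


def definition_tokens(tool: ToolDef) -> int:
    return rough_tokens(tool.name + tool.description + tool.schema)


def keywords(text: str) -> set[str]:
    words = text.lower().replace("_", " ").split()
    return {w for w in words if w not in STOPWORDS}


def search_tools(registry: list[ToolDef], query: str, limit: int = 2) -> list[ToolDef]:
    """Return up to `limit` tools ranked by keyword overlap with the query."""
    wanted = keywords(query)
    scored = []
    for tool in registry:
        score = len(wanted & keywords(tool.name + " " + tool.description))
        if score > 0:
            scored.append((score, tool))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [tool for _, tool in scored[:limit]]


# Hypothetical internal tools.
REGISTRY = [
    ToolDef("create_ticket", "Open a support ticket in the helpdesk",
            '{"title": "string", "body": "string"}'),
    ToolDef("deploy_service", "Deploy a service to staging or production",
            '{"service": "string", "env": "string"}'),
    ToolDef("query_invoices", "Look up customer invoices by account",
            '{"account_id": "string"}'),
    ToolDef("run_tests", "Run the test suite for a repository",
            '{"repo": "string"}'),
]

# Eager: every definition is loaded. Lazy: only the search hits are loaded.
eager_cost = sum(definition_tokens(t) for t in REGISTRY)
matches = search_tools(REGISTRY, "deploy the billing service to staging")
lazy_cost = sum(definition_tokens(t) for t in matches)

print([t.name for t in matches], eager_cost, lazy_cost)
```

With four tools the saving is small; the gap widens as the registry grows, because the eager cost scales with the number of tools while the lazy cost scales with the number of matches.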

Costing Opus 4.5: how pricing changes procurement dynamics

Opus 4.5’s list pricing is $5 per million input tokens and $25 per million output tokens. That is significantly higher than some competitors. For context, Gemini 3 Pro pricing is roughly $2/$12 per million input/output tokens for prompts below 200,000 tokens and $4/$18 for larger prompts.

At those list prices, Opus 4.5 costs about 2.5 times as much for input and about 2.1 times as much for output on prompts under 200,000 tokens; for larger prompts the premium narrows to roughly 25 percent on input and 39 percent on output. For Canadian tech leaders, that introduces tradeoffs:

- Value over sticker price: If Opus 4.5 reduces tokens needed and shortens time to completion, its higher per-token price could still yield lower total project costs.

- Task-specific procurement: Use the most cost-effective model for low-risk, high-volume workflows and reserve Opus 4.5 for critical coding agents and complex orchestration tasks.

- Budget planning: Canadian public sector procurement and regulated industries should model both token consumption and model accuracy to justify higher per-token pricing.
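The "value over sticker price" argument comes down to arithmetic that is easy to check. The sketch below uses the list prices quoted above; the task size (20,000 input and 4,000 output tokens) is a hypothetical workload, and the break-even figure assumes input and output tokens shrink by the same fraction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # dollars per million input tokens
    output_per_million: float  # dollars per million output tokens

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Dollar cost of one task at list price."""
        return (input_tokens * self.input_per_million
                + output_tokens * self.output_per_million) / 1_000_000


# List prices as quoted in this article.
OPUS_4_5 = Pricing(5.0, 25.0)
GEMINI_3_PRO_SHORT = Pricing(2.0, 12.0)  # prompts under 200,000 tokens

# Hypothetical task size, identical for both models.
INPUT_TOKENS, OUTPUT_TOKENS = 20_000, 4_000

gemini_cost = GEMINI_3_PRO_SHORT.cost(INPUT_TOKENS, OUTPUT_TOKENS)
opus_cost = OPUS_4_5.cost(INPUT_TOKENS, OUTPUT_TOKENS)

# Fraction of the token budget Opus 4.5 could use and still match Gemini's
# cost, assuming input and output tokens scale down together.
break_even_fraction = gemini_cost / opus_cost

print(f"Gemini 3 Pro: ${gemini_cost:.3f}  Opus 4.5: ${opus_cost:.3f}")
print(f"Break-even token fraction: {break_even_fraction:.0%}")
```

For this workload, Opus 4.5 would need to finish the task in under about 44 percent of the tokens to win on price alone. Fewer retries and less human rework shift that threshold, which is why cost per successful task is a better procurement metric than cost per token.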

Hiring, evaluation, and the changing role of model-based assessments

Anthropic reportedly gave Opus 4.5 its notoriously difficult take-home engineering exam. Working within the exam’s two-hour limit, the model scored higher than any human candidate had. That anecdote has several implications for Canadian tech hiring and assessment practices.

- Skills validation shifts: Traditional coding take-homes may no longer differentiate the top human talent from advanced models. Firms in the GTA and across Canada must adapt assessments to test collaboration, system design, and domain judgment rather than raw code completion alone.

- Augmented productivity: Organizations can use models as assistants for interviewer calibration, code review scaffolds, and interview grading — but they must be careful not to replace human judgment.

- Ethics and fairness: If models outscore candidates, hiring teams need transparency and governance to ensure bias is not baked into automated evaluations.

Practical implications for Canadian tech companies

What should Canadian CTOs, CIOs, and product leaders do now? The arrival of Opus 4.5 opens opportunities and risks. Here is a practical roadmap for leaders building or buying AI capabilities.

1. Audit task fit

Map the tasks where coding agents and agentic tool use deliver the most value. Prioritize internal development workflows, developer experience improvements, and customer-service automation where Opus 4.5’s strengths in terminal usage and tool orchestration matter most.

2. Run hybrid pilots

Deploy Opus 4.5 in conjunction with other models. Use the less expensive models for large-volume, low-complexity tasks and Opus 4.5 for high-value orchestration and coding tasks. Measure tokens per successful transaction, not just raw accuracy.

3. Model governance and procurement

Include token-efficiency metrics, output quality, and cost-per-successful-task in procurement scorecards. For public and regulated Canadian entities, document why a higher per-token model was chosen for specific workflows.

4. Update hiring and assessment

Shift hiring assessments to measure systems thinking, domain expertise, and cross-functional collaboration. Use models as tools for productivity measurement rather than as direct substitutes for candidate evaluation.

5. Invest in tooling that reduces context bloat

Adopt tool discovery and programmatic calling patterns to keep context windows efficient. This is a quick win for both token cost and model reliability.
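The metric recommended for hybrid pilots, tokens per successful transaction, can be computed from basic run logs. The following is a minimal sketch: the record fields, the model labels, and the sample numbers are invented for illustration and are not measurements of any real model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TaskResult:
    model: str
    tokens_used: int
    succeeded: bool


def tokens_per_success(results: list[TaskResult], model: str) -> Optional[float]:
    """Total tokens spent by `model`, failures included, per successful task.

    Returns None when the model has no successful runs.
    """
    runs = [r for r in results if r.model == model]
    successes = sum(1 for r in runs if r.succeeded)
    if successes == 0:
        return None
    return sum(r.tokens_used for r in runs) / successes


# Made-up pilot data: the budget model fails more often on this task type.
pilot = [
    TaskResult("budget", 4000, False),
    TaskResult("budget", 4200, True),
    TaskResult("budget", 3900, False),
    TaskResult("budget", 4100, True),
    TaskResult("premium", 2500, True),
    TaskResult("premium", 2600, True),
    TaskResult("premium", 2400, True),
    TaskResult("premium", 2700, False),
]

budget_tps = tokens_per_success(pilot, "budget")
premium_tps = tokens_per_success(pilot, "premium")
print(budget_tps, premium_tps)
```

Counting the tokens burned by failed runs is deliberate: a model that is cheap per call but needs several attempts can cost more per completed task. Multiplying each figure by the model's per-token price gives the cost-per-successful-task number suggested for procurement scorecards.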

Checklist: operational readiness for Opus 4.5-style deployments

- Identify high-value coding and orchestration tasks that could benefit from agentic models.

- Instrument token usage and cost-per-task across candidate models.

- Establish a hybrid model policy to match model strengths to business needs.

- Put governance guardrails in place for model behavior, data privacy, and vendor lock-in.

- Train developer teams on programmatic tool calling and tool-search paradigms.

- Plan pilot budgets that account for higher per-token prices but potential overall cost savings from efficiency.

Tools and partners: where Canadian tech teams can accelerate adoption

Beyond core models, the ecosystem matters. Terminal-first developer tools and platforms that support agent orchestration are becoming strategic infrastructure. Tools that enable multi-agent control, codebase indexing, and robust integrations will accelerate time to value.

For Canadian tech teams focused on modern developer workflows, tools that prioritize terminal UX, agent management, and native LLM integrations reduce friction. These platforms can act as the connective tissue between Opus 4.5’s agentic strengths and existing enterprise systems.

Sector-specific implications for Canada

Different verticals in Canada will experience the Opus 4.5 moment differently.

- Financial services: Banks and fintech firms in Toronto should evaluate Opus 4.5 for compliance-aware automation that interacts with internal systems, while ensuring robust audit trails and human-in-the-loop controls.

- Public sector: Government IT organizations can leverage Opus 4.5 for developer productivity in modernization projects; however, procurement rules will demand transparent cost-benefit justifications for a higher per-token price.

- Startups: Early-stage Canadian startups should be strategic: use cheaper models for general workloads but reserve Opus 4.5 for critical developer-facing features that unlock differentiation.

- Healthcare and regulated industries: The model’s outputs must be validated rigorously. Use programmatic tool calling and tool-use examples to keep model behavior constrained and auditable.

Real-world example: how a Canadian SaaS company could deploy Opus 4.5

Imagine a Toronto-based SaaS company that builds developer tools and wants to provide a one-click terminal automation experience for its customers. Using Opus 4.5 for the agentic orchestration layer would let the company:

- Parse customer intents into multi-step terminal workflows reliably.

- Search tool definitions dynamically so the model fetches only the definitions it needs at runtime.

- Invoke test, deploy, and monitoring tools programmatically to reduce context usage and increase reliability.

- Monitor token usage and reroute routine tasks to a lower-cost model while preserving Opus 4.5 for high-impact orchestration.

This hybrid approach balances cost and capability, improves developer experience, and preserves governance — a model other Canadian tech firms can replicate.
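The rerouting step in that scenario could start as a simple rule before it becomes anything more sophisticated. The sketch below assumes two placeholder model tiers and two hand-picked signals (estimated tool steps and a high-impact flag); none of these names are real API identifiers, and the threshold is arbitrary.

```python
from dataclasses import dataclass

# Placeholder tier labels, not real API model IDs.
PREMIUM = "opus-4.5-tier"
BUDGET = "lower-cost-tier"


@dataclass(frozen=True)
class Task:
    description: str
    tool_steps: int     # estimated number of tool calls the task needs
    high_impact: bool   # e.g. touches production systems


def choose_model(task: Task, max_budget_steps: int = 2) -> str:
    """Send high-impact or multi-step orchestration to the premium tier."""
    if task.high_impact or task.tool_steps > max_budget_steps:
        return PREMIUM
    return BUDGET


tasks = [
    Task("Summarize yesterday's build log", tool_steps=1, high_impact=False),
    Task("Run tests, deploy to staging, verify health checks", tool_steps=5, high_impact=False),
    Task("Roll back the production release", tool_steps=2, high_impact=True),
]

routing = {t.description: choose_model(t) for t in tasks}
for description, model in routing.items():
    print(f"{model:16} <- {description}")
```

A static rule like this is easy to audit and explain to budget holders. Teams can later tune the threshold using measured tokens per successful task for each tier.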

Risks and governance considerations

Opus 4.5’s power introduces policy questions. Canadian enterprises must consider:

- Data residency and privacy: Ensure model providers meet Canadian data sovereignty and privacy requirements, especially in healthcare and public sectors.

- Auditability: Programmatic tool calling should produce structured logs to enable post-hoc audits of agent decisions.

- Procurement transparency: Higher per-token prices require clear ROI justifications and vendor comparisons for budget holders and auditors.

- Equity and workforce strategy: Reskilling programs will be essential to help developers and IT staff work productively with models without losing domain expertise.
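For the auditability point, one possible shape for structured logging is a thin wrapper around every tool invocation. This is a sketch under assumptions: the wrapper, the record fields, and the `restart_service` tool are hypothetical, and a real deployment would write to durable, access-controlled storage rather than an in-memory list.

```python
import json
from datetime import datetime, timezone
from typing import Any, Callable


def call_tool_with_audit(
    tool_name: str,
    tool_fn: Callable[..., Any],
    arguments: dict[str, Any],
    audit_log: list[str],
) -> Any:
    """Invoke a tool and append one JSON record describing the call."""
    record: dict[str, Any] = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": tool_name,
        "arguments": arguments,
    }
    try:
        result = tool_fn(**arguments)
        record["status"] = "ok"
        record["result"] = result
        return result
    except Exception as exc:
        record["status"] = "error"
        record["error"] = repr(exc)
        raise
    finally:
        # Runs on success and failure, so every attempt leaves a trace.
        audit_log.append(json.dumps(record, sort_keys=True, default=str))


def restart_service(name: str) -> str:
    """Hypothetical tool used only for this example."""
    return f"restarted {name}"


audit_log: list[str] = []
outcome = call_tool_with_audit(
    "restart_service", restart_service, {"name": "billing-api"}, audit_log
)
print(outcome)
print(audit_log[0])
```

Writing one JSON line per call keeps the log machine-readable, so auditors can filter by tool, status, or time window after the fact.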

Quotes from industry voices

“Best coding model I’ve ever used and it’s not close. We’re never going back.”

“Big gains in ability to do practical work — the best results I’ve seen in one-shot automation for production tasks.”

These reactions underline the excitement across engineering communities. Canadian tech leaders must temper that excitement with measurement and governance.

Conclusion: a strategic moment for Canadian tech

Opus 4.5 is not merely a performance upgrade. It reframes how enterprises think about agentic systems, token efficiency, and tool orchestration. For Canadian tech leaders, the implications touch procurement, developer workflows, governance, hiring, and budget planning.

Adoption is not an all-or-nothing decision. The most resilient approach is selective and pragmatic: match the model to the task, pilot hybrid configurations, and measure token-efficiency together with output quality. When Canadian tech teams get those levers right, higher per-token prices can be offset by better outcomes, faster delivery, and more robust automation.

Is the Canadian tech sector ready to move beyond benchmarks and design architectures that extract maximum value from these new models? That will be the defining question for CTOs and CIOs in the coming 12 months.